In modern infrastructure, performance is no longer limited by CPU cores or GPU availability alone. Increasingly, the real constraint is power. Electrical ceilings, circuit balancing, thermal limits, and cooling capacity now determine how much compute can be safely deployed per rack or cluster.

Yet most environments still treat power as a static facility metric rather than a programmable resource.

To increase virtualization density and AI throughput, power must move from the electrical room into the orchestration layer.

Enterprises frequently provision servers and GPUs based on theoretical maximum capacity. In practice, workloads rarely align with static assumptions. Some virtual machines are idle while others spike. AI training jobs surge unpredictably. GPU clusters may be underutilized simply to avoid tripping power thresholds.

Without real-time, workload-aware power telemetry, infrastructure teams compensate by overprovisioning. This reduces risk but wastes capacity.

The result is stranded compute.
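A rough worked example shows how the gap opens up. The sketch below compares how many servers fit on one circuit when planning by nameplate rating versus by measured peak draw. Every figure and name in it (`CIRCUIT_LIMIT_W`, `NAMEPLATE_W`, `MEASURED_PEAK_W`, the 20% margin) is a hypothetical value chosen for illustration, not data from any real deployment.

```python
def servers_per_circuit(circuit_limit_w: int, per_server_w: int,
                        safety_margin_pct: int = 20) -> int:
    """Number of servers that fit under a circuit's usable power budget."""
    # Integer math keeps the illustration free of floating-point rounding.
    usable_w = circuit_limit_w * (100 - safety_margin_pct) // 100
    return usable_w // per_server_w


# Hypothetical figures, for illustration only.
CIRCUIT_LIMIT_W = 5000   # circuit ceiling
NAMEPLATE_W = 800        # theoretical maximum draw per server
MEASURED_PEAK_W = 500    # observed peak draw per server

by_nameplate = servers_per_circuit(CIRCUIT_LIMIT_W, NAMEPLATE_W)
by_measurement = servers_per_circuit(CIRCUIT_LIMIT_W, MEASURED_PEAK_W)
stranded = by_measurement - by_nameplate

print(f"Planned by nameplate:   {by_nameplate} servers")
print(f"Planned by measurement: {by_measurement} servers")
print(f"Stranded capacity:      {stranded} servers per circuit")
```

Under these assumed numbers, nameplate planning leaves room for three more servers per circuit that the electrical budget could already support.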

Increasing density requires more than faster processors. It requires an intelligent power fabric.

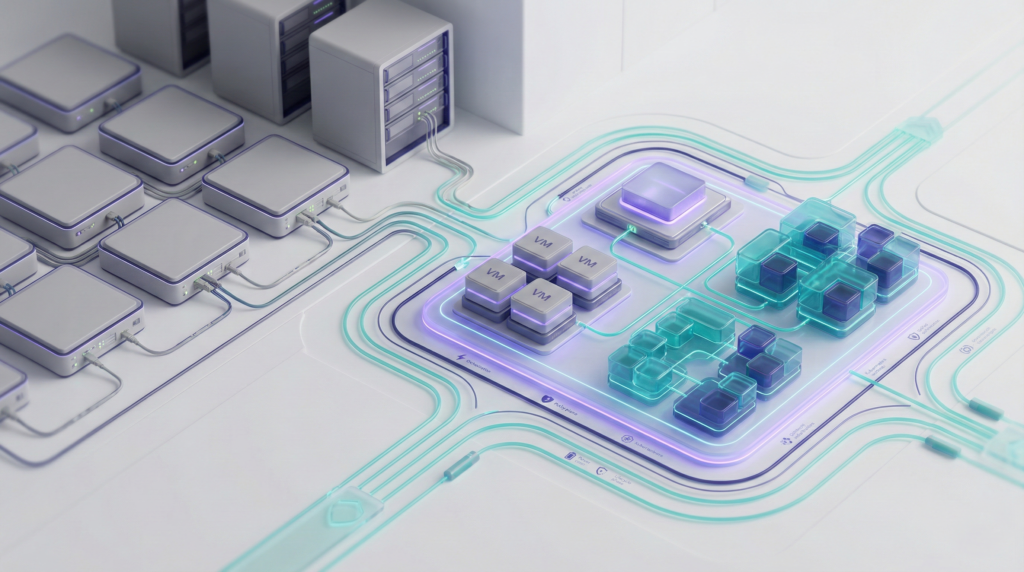

Karios PowerLink embeds power visibility directly into the server power supply. Rather than relying on external meters or aggregated rack-level estimates, PowerLink provides real-time, per-node telemetry integrated into Karios Core.

Because power awareness is native to the Infrastructure Operating System, orchestration decisions can account for actual energy draw alongside CPU, memory, and storage metrics.

This enables:

In smaller server deployments, this translates into materially improved virtualization efficiency without expanding electrical infrastructure.
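To make the idea of power-aware placement concrete, here is a minimal sketch of a scheduler that treats measured power headroom as a placement constraint alongside CPU and memory. It is not the Karios Core API. The `Node` type, its fields, and the `place` function are hypothetical names invented for this illustration, and the per-node power cap is an assumed input.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Node:
    """Hypothetical view of a node as seen by a power-aware scheduler."""
    name: str
    free_cpus: int
    free_mem_gb: int
    power_draw_w: float  # latest measured draw (assumed to come from telemetry)
    power_cap_w: float   # power budget allotted to this node (assumed)

    @property
    def power_headroom_w(self) -> float:
        return self.power_cap_w - self.power_draw_w


def place(nodes: Sequence[Node], cpus: int, mem_gb: int,
          est_power_w: float) -> Optional[Node]:
    """Pick the feasible node with the most power headroom, or None."""
    candidates = [
        n for n in nodes
        if n.free_cpus >= cpus
        and n.free_mem_gb >= mem_gb
        and n.power_headroom_w >= est_power_w
    ]
    return max(candidates, key=lambda n: n.power_headroom_w, default=None)


nodes = [
    Node("node-a", free_cpus=16, free_mem_gb=64, power_draw_w=700, power_cap_w=750),
    Node("node-b", free_cpus=8, free_mem_gb=32, power_draw_w=400, power_cap_w=750),
]

chosen = place(nodes, cpus=4, mem_gb=16, est_power_w=120)
print(chosen.name if chosen else "no feasible node")
```

In this example `node-a` has more free CPU and memory, but only 50 W of headroom, so the workload lands on `node-b`. A scheduler that looked at CPU and memory alone would have picked the node closest to its power limit.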

For high-density environments, particularly those using NVIDIA GPUs, Karios Kinetic extends this intelligence across up to 48 power connections within a rack or cluster.

AI workloads are uniquely power-intensive and burst-driven. Training runs can push circuits toward maximum draw, forcing conservative provisioning strategies. Karios Kinetic provides granular, multi-circuit visibility and control that allows operators to approach true power limits safely.

With integrated telemetry and orchestration:

The outcome is increased AI throughput within the same physical footprint.
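One way to picture approaching power limits safely is an admission check that runs against live per-circuit draw instead of a fixed margin. The sketch below is an assumption-laden illustration, not Karios Kinetic's interface: the `admissible` function, the circuit names, the wattages, and the 5% and 20% guard bands are all hypothetical.

```python
from typing import Dict, Tuple

# circuit id -> (limit in watts, current measured draw in watts); hypothetical values
Circuits = Dict[str, Tuple[float, float]]


def admissible(circuits: Circuits, job_load_w: Dict[str, float],
               guard_band_pct: float = 5) -> bool:
    """True if the job's added load keeps every affected circuit under its
    limit minus the guard band."""
    for circuit_id, added_w in job_load_w.items():
        limit_w, draw_w = circuits[circuit_id]
        ceiling_w = limit_w * (100 - guard_band_pct) / 100
        if draw_w + added_w > ceiling_w:
            return False
    return True


circuits: Circuits = {
    "circuit-1": (5000, 3900),
    "circuit-2": (5000, 4500),
}

# An 800 W training burst on circuit-1 fits under a 5% guard band (4700 <= 4750)...
fits_with_telemetry = admissible(circuits, {"circuit-1": 800})
# ...but would be refused under a static 20% margin (4700 > 4000).
fits_with_static_margin = admissible(circuits, {"circuit-1": 800}, guard_band_pct=20)
# A 400 W burst on the busier circuit-2 is refused either way (4900 > 4750).
fits_on_busy_circuit = admissible(circuits, {"circuit-2": 400})

print(fits_with_telemetry, fits_with_static_margin, fits_on_busy_circuit)
```

The narrower guard band is only defensible when the draw figures are current and per-circuit, which is the point of pairing telemetry with orchestration.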

The connection between watts and workloads is direct. Every inefficiency in power management reduces usable compute. Every unnecessary safety margin limits density.

By integrating power as a managed resource within the Infrastructure Operating System, Karios transforms energy from a constraint into an optimization variable.

Virtualization density increases because workload placement reflects actual electrical capacity. AI performance scales because orchestration understands real-time power draw. Cooling demand stabilizes because consumption is predictable rather than reactive.

An intelligent power fabric aligns electrical reality with workload intent. It bridges the gap between facilities management and IT operations. It allows enterprises to deploy more compute, run more AI jobs, and maximize hardware investment without expanding the datacenter footprint.

In an era where power availability defines competitive advantage, increasing compute is no longer about adding servers.

It is about orchestrating energy with precision.

From watts to workloads, infrastructure performance begins with intelligent power control.